Still, this is a highly experimental tool at this stage with a lot of questions about the appropriateness of using such "AI" assistant tools for research, so use it with caution! This will allow you to extract a lot more information such as null hypothesis, methods, limitations etc at least in theory. Still, it is important to check against the abstract to be safe.įinally, Elicit currently only has access to titles and abstracts and the obvious next step is with full text. The authors of the tool claim to have improved on this issue by doing a sequence of fine-tuning with high-quality datasets but they “haven’t fully solved the problem yet”.įor example, if you select a column for “Country”, the column values are often correctly left blank because the abstract does not include the country of study (at least not directly). Language models are often known to “hallucinate” or make up answers, but in this case, I have seldom seen it happen. That said this is in early-stage beta and one must be careful not to fully trust the results. This is particularly so if you are engaged in systematic reviews (the pre available columns for “population” “intervention”, “outcome” hints at this). The fact that it uses GPT-3 to good effect to “read” and extract facts from the abstract to create a research matrix of papers is a compelling idea. Having tried many Q&A (question & answer) academic search engines (e.g., those based on the CORD-19 dataset to answer COVID-19 questions), this is the first one to ever impress me as potentially useful. Instead of just giving you documents that are highly relevant, it tries to extract from the document and give you the answer directly. Librarian’s Take: Elicit is a type of search engine that is sometimes called Q&A (Question and answer) engine. You can of course download this table as csv or bib format once you are happy with the result. No doubt more fine-tuning is needed here. 
The results are not perfect but it did manage to correctly extract the gender of participants for 3 of the 5 cases. In my example, I tried asking Elicit to extract the gender of the participants in a separate column. Still not impressed? You can even ask Elict to extract custom columns of data!. Type of paper (Randomised Control Trial, Review, Systematic Review, Meta-analysis)īut also characteristics of the paper itself such as:.

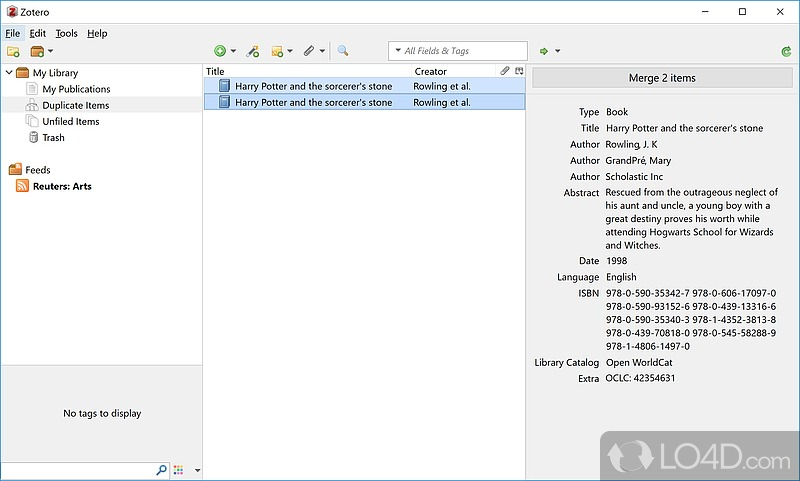

More importantly, Elicit allows you to create other columns of information and as a default, it suggests columns for not just paper related metadata like:

You can also click on the “Filters” icon to filter by year range, type of paper (Randomised Control Trial, Review, Systematic Review, Meta-analysis) as well as keywords in the abstract. You can narrow down your search further by clicking on the “Start” icon of each result that you find relevant and it can find other similar papers (In my example, I did not specify exactly what ‘health’ means, so you can further narrow it down using this method). In the toy example below, I enter “Does Mindfulness affect health” and as you can see below it not just gives me papers it thinks are relevant but summarises the abstract with a sentence that should in theory answer my question. For full details on how Elicit works, in terms of ranking models, fine tuning and more refer to - How elicit works. On the surface, looks like the run of a mill academic search engine that helps you find relevant papers, but looks can be deceiving.įirst enter your question or research statement into Elict and it finds not just the paper that may have the answer but “reads” and “summarises” the abstract and generates a one-line sentence summary of the abstract that answers your question. . One month later in December 2021, they announced functionality allowing you to fine-tune the model with your own training data.Į is one of the first in the world to take advantage of this functionality by training GPT-3 on Semantic Scholar metadata (title and abstract). Late last year, OpenAI made GPT-3 available as an API open to all without a waitlist and if you are curious, you can try it out. People were amazed by GPT3’s general ability to do code completion (e.g., OpenAI’s Codex model which is the basis for GitHub Copilot), write stories, carry out conversations and more with nothing but a few or no prompts. One of the largest language models at the time created (175 billion parameters) using deep learning, it exhibited state-of-the-art results on various machine learning test suites.

You may have read about OpenAI’s Language model GPT-3 that took the world by storm in 2020. Starting from this issue of ResearchRadar, Aaron will keep you updated on some of the newest and hottest research tools of interest in a regular feature, The bleeding edge.
